
A Visual Guide to Attention Variants in Modern LLMs

Must Read

Originally published on Ahead of AI by Sebastian Raschka

Summary & Key Takeaways

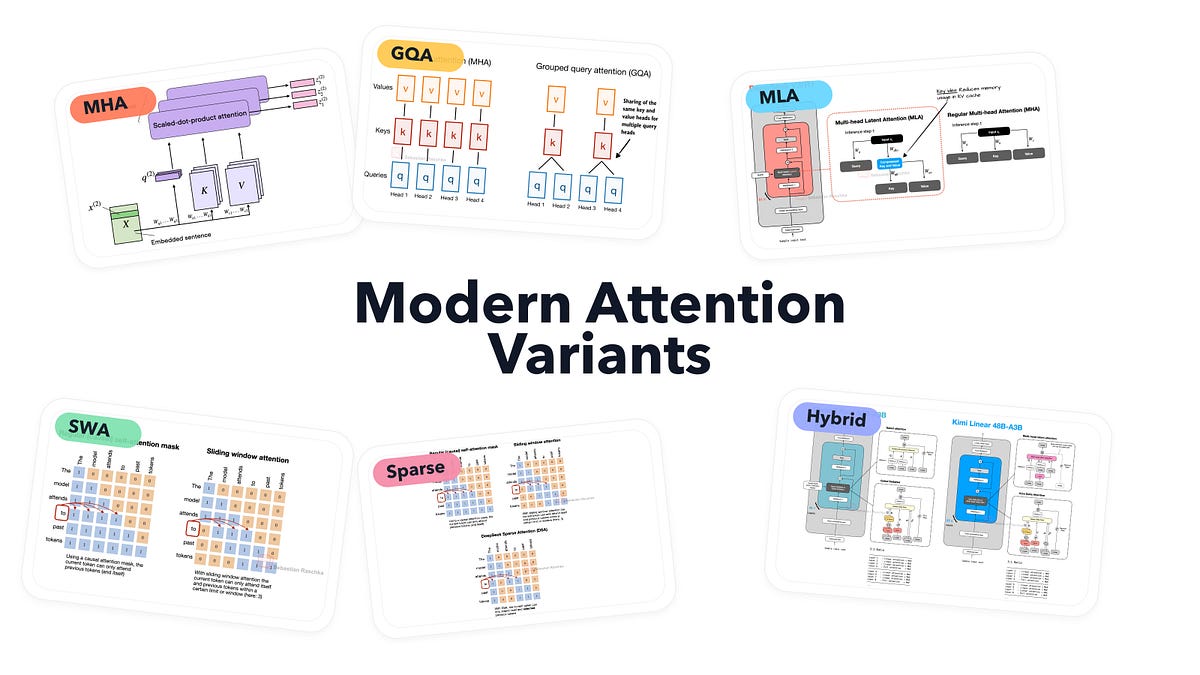

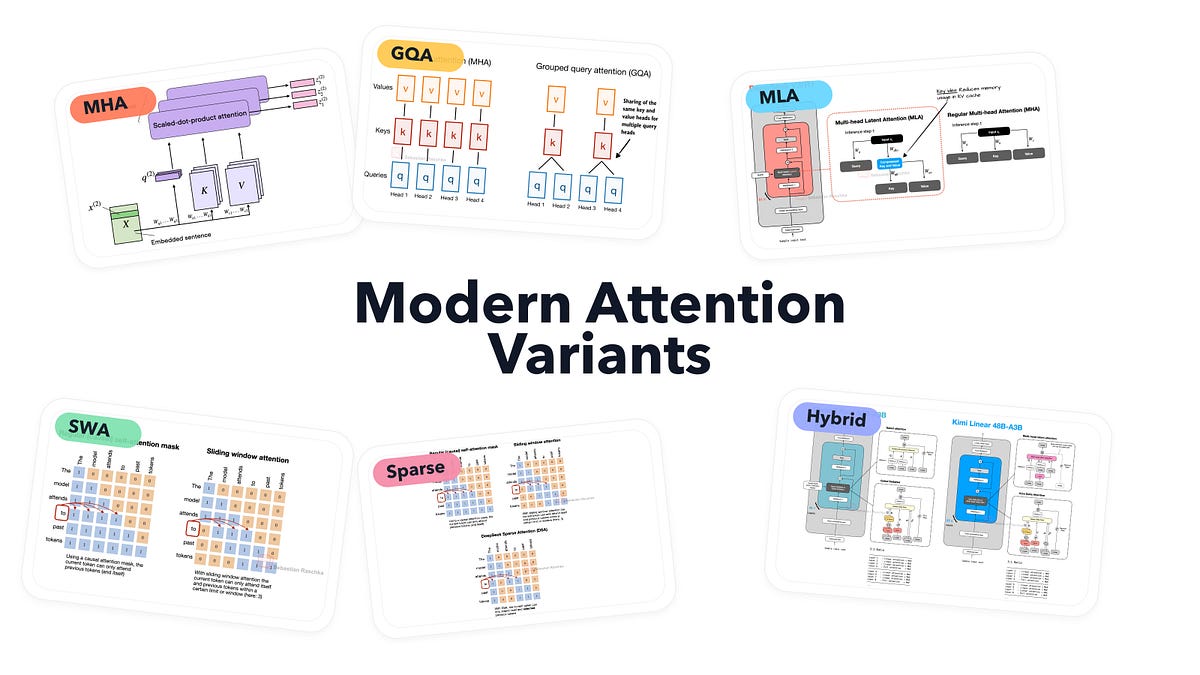

- Comprehensive Overview: The article offers a detailed visual exploration of the evolution and different types of attention mechanisms employed in modern Large Language Models (LLMs).

- Key Variants Covered: It breaks down concepts like Multi-Head Attention (MHA), Grouped-Query Attention (GQA), Multi-Head Latent Attention (MLA), sparse attention, and various hybrid architectures.

- Visual Learning: The article relies on visual explanations to demystify these complex components, making them more accessible to a broader audience interested in LLM internals.

- Practical Relevance: Understanding these variants is crucial for comprehending how LLMs process information, manage computational resources, and scale effectively.
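
To make the point about computational resources concrete, here is a minimal sketch (not taken from the original article) of how the number of key/value heads changes the per-token KV-cache size when moving from MHA to GQA. The model dimensions below are hypothetical, and the helper name `kv_cache_bytes_per_token` is our own.

```python
def kv_cache_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV-cache bytes stored per token.

    Each layer caches one key and one value vector per KV head,
    hence the leading factor of 2. bytes_per_value=2 assumes 16-bit storage.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
n_layers = 32
n_query_heads = 32
head_dim = 128

# MHA: every query head has its own key/value head.
mha_bytes = kv_cache_bytes_per_token(n_layers, n_query_heads, head_dim)

# GQA: query heads are split into groups that share key/value heads
# (here, an assumed 8 KV heads shared by 32 query heads).
gqa_bytes = kv_cache_bytes_per_token(n_layers, 8, head_dim)

print(f"MHA: {mha_bytes} bytes/token")
print(f"GQA: {gqa_bytes} bytes/token")
print(f"Reduction factor: {mha_bytes // gqa_bytes}x")
```

Under these assumed numbers, sharing key/value heads across groups of four query heads shrinks the cache by the same factor of four, which is the kind of trade-off the variants above are designed around.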

Our Commentary

Understanding the internal mechanics of LLMs is becoming increasingly vital for anyone working with or even just curious about AI. This visual guide is a godsend for readers trying to grasp the nuances of attention variants without getting lost in dense academic papers. The clarity provided here is exactly what we look for in educational content, which is why we marked this article as "Must Read"!
